About Kubernetes’ CNI Plugins

Demystifying the usage of CNI plugins

When setting up a Kubernetes cluster, installing a network plugin is mandatory for the cluster to be operational. Put simply, the role of a network plugin is to set up network connectivity so that Pods running on different nodes in the cluster can communicate with each other. Depending on the plugin, different network solutions can be provided: overlay (VXLAN, IP-in-IP) or non-overlay.

To make network plugins interchangeable, Kubernetes relies on the Container Network Interface (CNI), so any network plugin that implements this interface can be used.

Kubernetes also allows the use of kubenet, a non-CNI network plugin. It is basic and offers a limited set of features.

If we use a managed cluster (there are plenty out there: Amazon EKS, Google GKE, DigitalOcean DOKS, OVH Managed Kubernetes, Scaleway Kapsule), the CNI network plugin has already been selected from among the many existing solutions and installed for us. If we install our own cluster, however, we need to select and install the plugin ourselves. Some external tools, like Rancher, make that process much easier.

In this article, we will present CNI and see how a network plugin is installed, configured, and used. We will follow the steps below:

- A quick introduction to Container Network Interface (CNI)

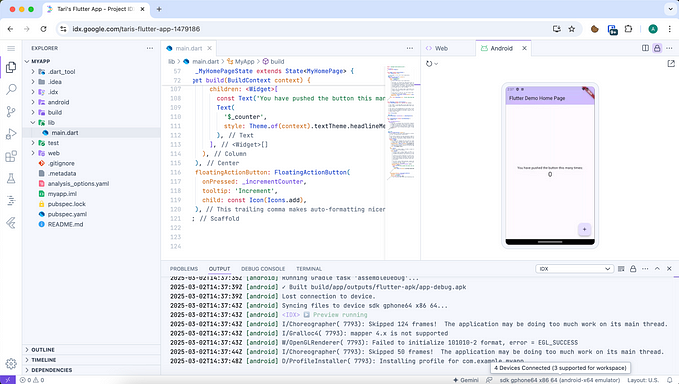

- Set up a k0s cluster with the Calico plugin

- Set up a k0s cluster with the Weave Net plugin

CNI — A Quick Introduction

What is CNI?

According to the official definition,

“CNI (Container Network Interface), a Cloud Native Computing Foundation project, consists of a specification and libraries for writing plugins to configure network interfaces in Linux containers, along with a number of supported plugins. CNI concerns itself only with network connectivity of containers and removing allocated resources when the container is deleted.”
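To make the definition a bit more concrete, here is a minimal sketch of what a container runtime hands to a CNI plugin. Per the CNI specification, a plugin is an executable that receives its parameters through `CNI_*` environment variables and a JSON network configuration on standard input. The sketch only builds and prints those two inputs; it does not run any plugin. The helper name `build_cni_invocation`, the network name `demo-net`, the container ID, the namespace path, and the subnet are all hypothetical values chosen for illustration. The `bridge` and `host-local` plugins are used only as an example; they are not the plugins installed later in this article.

```python
import json


def build_cni_invocation(command, container_id, netns_path,
                         ifname="eth0", plugin_dir="/opt/cni/bin"):
    """Return (env, stdin_payload) for one call to a CNI plugin.

    Illustrative only: it assembles the inputs a runtime would pass,
    but never executes a plugin binary.
    """
    # Network configuration, sent to the plugin as JSON on stdin.
    net_conf = {
        "cniVersion": "1.0.0",
        "name": "demo-net",      # hypothetical network name
        "type": "bridge",        # plugin binary to look up in CNI_PATH
        "bridge": "cni0",
        "isGateway": True,
        "ipMasq": True,
        "ipam": {                # IP address management is delegated to another plugin
            "type": "host-local",
            "ranges": [[{"subnet": "10.22.0.0/16"}]],  # hypothetical subnet
        },
    }

    # Parameters of the call, passed as environment variables.
    env = {
        "CNI_COMMAND": command,           # e.g. ADD on creation, DEL on deletion
        "CNI_CONTAINERID": container_id,
        "CNI_NETNS": netns_path,          # network namespace of the container
        "CNI_IFNAME": ifname,             # interface to create inside the container
        "CNI_PATH": plugin_dir,           # where plugin executables are searched
    }
    return env, json.dumps(net_conf, indent=2)


env, payload = build_cni_invocation(
    "ADD", "example-container", "/var/run/netns/example"
)
for key, value in env.items():
    print(f"{key}={value}")
print(payload)
```

Calling the same helper with `"DEL"` instead of `"ADD"` corresponds to the second half of the definition: the runtime asks the plugin to release the resources it allocated for that container.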